Anthropic Built Mythos to Defend Us. The Incentive Architecture Guarantees It Won’t.

Why the people who could fix the internet won’t — and why the system rewards them for it

A Model Called Mythos

In late March 2026, Anthropic accidentally left a draft blog post in an unsecured, publicly searchable data store. The document revealed the existence of a new AI model called Claude Mythos — described internally as the most capable model Anthropic had ever built, a “step change” in performance, and something that “poses unprecedented cybersecurity risks.”

The irony of a company warning about unprecedented cybersecurity risks while leaving its own documents in an unsecured, publicly searchable data store needs no elaboration.

But the leak itself is almost beside the point. What matters is what the document said about the model’s capabilities: that it is “currently far ahead of any other AI model in cyber capabilities” and that it “presages an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders.”

Anthropic’s stated plan was to release Mythos first to defenders — cybersecurity organizations, infrastructure operators, banks — giving them a head start in finding and fixing vulnerabilities before offensive actors could exploit them.

It is a reasonable plan. It is also, structurally, unlikely to work. Not because the technology is wrong. Because the incentives are.

The Infrastructure We Actually Have

Before examining why the incentives fail, it is necessary to understand what defenders would actually be defending.

The digital infrastructure underlying modern civilization was not designed. It accumulated. Decades of engineers solving immediate problems under time pressure, shipping fixes that worked well enough, and moving on. The engineer who got the system running again at 3am on a Tuesday went home and forgot exactly why the workaround worked. The fix kept running. Nobody asked.

Software engineers call this technical debt. The term is too clean. What actually exists is closer to technical sediment — layers of decisions made under pressure, by people who are gone, solving problems that no longer exist, now holding up systems that have become load-bearing walls nobody knew were load-bearing until someone tried to move them.

An estimated 95% of ATM swipes pass through code written in COBOL, a programming language first deployed in the 1960s. These systems are not untouched artifacts from another era. They have been continuously modified by successive generations of engineers over decades, each layer of changes building on the last, often with incomplete documentation of what came before. The result is not a stable old system but something worse: a system whose internal logic reflects the accumulated decisions of hundreds of people, most of whom are retired or dead, many of whom never met each other, solving problems the next person in line may not have fully understood. Modern systems interface with this code through bridges that were built as temporary workarounds, are now three decades old, and are themselves depended upon by systems built on top of them.

This is not unique to banking. It is the condition of critical infrastructure broadly: power grids, water treatment systems, air traffic control, hospital networks, government databases. The common thread is systems that cannot be taken offline for remediation, that have dependencies nobody fully mapped, running on hardware no longer manufactured, interfacing with newer systems through connective tissue that is itself poorly understood.

The theoretical vulnerability of complex software has always existed. What changes with Mythos-class capability is the distance between theoretical and practical. A sufficiently capable model does not experience legacy software as a maze requiring domain expertise to navigate. It experiences it as pattern space, and it has been trained on vast swaths of how humans have structured that pattern space, including most known methods for breaking it.

What looks like a complex labyrinth to a human analyst looks like a mostly-open field to something that can effectively hold an entire system’s architecture in working memory, probe hundreds of angles iteratively, learn from each probe, and adapt when resistance is encountered. The mechanism is not literal omniscience — these models work through chunking, iteration, and synthesis — but the functional result is a capacity for comprehensive analysis that no human team can match for speed or scale.

Mythos running as a defender on a major bank’s infrastructure would not return a tidy list of problems. It would return a graph — thousands of entangled vulnerabilities, many traceable to decisions made decades ago, many interconnected in ways that make individual remediation risky, all mapped with a comprehensiveness no human team could achieve.

Then comes the question nobody wants to answer: what happens next?

The Remediation Problem

Knowledge of vulnerability is not the same as capacity to remediate it.

A Mythos-class audit of a major financial institution might surface three thousand significant vulnerabilities. Some will be patched quickly. Many will not — not because anyone is indifferent to risk, but because remediation in living systems that cannot be taken offline is genuinely hard.

Patching legacy infrastructure is not like applying a software update to a laptop. It requires understanding the dependencies of the system being changed, testing against those dependencies, managing the risk that the fix breaks something the patch-writer didn’t know existed, coordinating across teams and vendors and regulators, and doing all of this while the system continues operating.

The history of catastrophic outages caused by patches, upgrades, and well-intentioned improvements is long. The 2012 Knight Capital trading collapse (45 minutes, $440 million gone) was caused by a faulty software deployment. Healthcare systems have gone down during ransomware recovery operations because the recovery process itself broke dependencies. For organizations managing critical infrastructure, the rational calculus sometimes genuinely comes down to a known vulnerability that can be monitored versus the unknown consequences of touching something nobody fully understands.

This is not stupidity. It is risk management under radical uncertainty with asymmetric downside. The fix can be worse than the vulnerability. Anyone who has managed complex legacy systems knows this is not hypothetical.

The result is that knowledge of vulnerabilities accumulates faster than the capacity to remediate them. The queue grows. Prioritization decisions get made, reprioritized, deferred. Years pass. The vulnerabilities remain.

During which time the same capability that produced the defender’s audit exists in the world for other uses.
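The queue dynamic described above can be made concrete with a toy model. The sketch below assumes a fixed monthly rate of newly discovered vulnerabilities and a fixed monthly remediation capacity. The function name and every number in it are hypothetical, chosen only to illustrate the arithmetic, and are not drawn from any real audit.

```python
def backlog_over_time(initial, found_per_month, fixed_per_month, months):
    """Return the open-vulnerability count at the end of each month.

    All inputs are hypothetical rates, not measurements.
    """
    backlog = initial
    history = []
    for _ in range(months):
        # New findings arrive; the team closes what it can, never below zero.
        backlog = max(0, backlog + found_per_month - fixed_per_month)
        history.append(backlog)
    return history


# Illustrative numbers only: a 3,000-item audit, then 100 new findings
# and 60 fixes per month for two years.
history = backlog_over_time(3000, 100, 60, 24)
print(history[0], history[-1])
```

Whenever discovery outpaces remediation in this model, the backlog only grows. Better discovery tools raise the first rate without touching the second.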

The Incentive Architecture

Here is where the problem stops being technical and becomes structural.

Existing frameworks do real work. NIST cybersecurity standards, ISO 27001, regulatory capital requirements, cyber insurance markets, mandatory stress testing — these are not theater. They have measurably improved baseline security across the financial sector and critical infrastructure. The question is not whether they help. It is whether they are sufficient for what is coming.

Imagine a bank CEO receiving a Mythos audit. Three thousand vulnerabilities. Full remediation requiring two years of profit. The CEO faces a genuine decision.

The honest version of that decision looks like this: the cost of remediation is concrete, immediate, and lands on this quarter’s earnings statement. The benefit is probabilistic — it might prevent an attack that might happen at an uncertain future date, to this institution specifically, in a way that wouldn’t be partially absorbed by government intervention anyway.

Shareholders will not reward the CEO who sinks two years of profit into infrastructure remediation that prevents a catastrophe that might not happen, or might happen to a competitor first, or might happen and trigger sovereign emergency response regardless of individual bank preparedness. Shareholders will ask why margins compressed. Analysts will downgrade the stock. The CEO who held the line on earnings and got hit by the catastrophe along with everyone else was unlucky. The CEO who sacrificed earnings to prevent it was a bad capital allocator.
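The CEO's calculation can be written down as a simple expected-value comparison. This is a minimal sketch under stated assumptions: the function names, the probability of attack, the size of the loss, and the share absorbed by others are all hypothetical inputs, and real boards weigh far more than a single inequality.

```python
def expected_private_loss(p_attack, loss_if_hit, share_absorbed):
    """Expected loss that actually lands on the institution.

    p_attack: assumed probability of a successful attack over the horizon
    loss_if_hit: assumed total loss if the attack succeeds
    share_absorbed: assumed fraction of that loss borne by others
                    (for example, through government intervention)
    """
    return p_attack * loss_if_hit * (1.0 - share_absorbed)


def remediation_looks_rational(cost, p_attack, loss_if_hit, share_absorbed):
    """True if the certain cost is smaller than the expected private loss."""
    return cost < expected_private_loss(p_attack, loss_if_hit, share_absorbed)


# Hypothetical figures in billions of dollars, for illustration only.
print(remediation_looks_rational(cost=2.0, p_attack=0.1,
                                 loss_if_hit=10.0, share_absorbed=0.5))
# Same institution, but with a higher assumed attack probability and
# none of the loss absorbed by anyone else.
print(remediation_looks_rational(cost=2.0, p_attack=0.3,
                                 loss_if_hit=10.0, share_absorbed=0.0))
```

The point of the sketch is the `share_absorbed` term. The more of a catastrophe an institution expects others to absorb, the smaller its private expected loss, and the harder it becomes to justify a certain cost today.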

Consider what happened at Equifax. In 2017, the company suffered one of the largest data breaches in history — 147 million people’s personal data exposed — because a known vulnerability in Apache Struts went unpatched for months. The patch was available. The vulnerability was flagged internally. It did not get prioritized. The cost of the breach: over $1.4 billion. The cost of the patch that would have prevented it: essentially zero. But the patch required coordination, testing, and attention — resources that were allocated elsewhere because the risk was probabilistic and the quarterly targets were not.

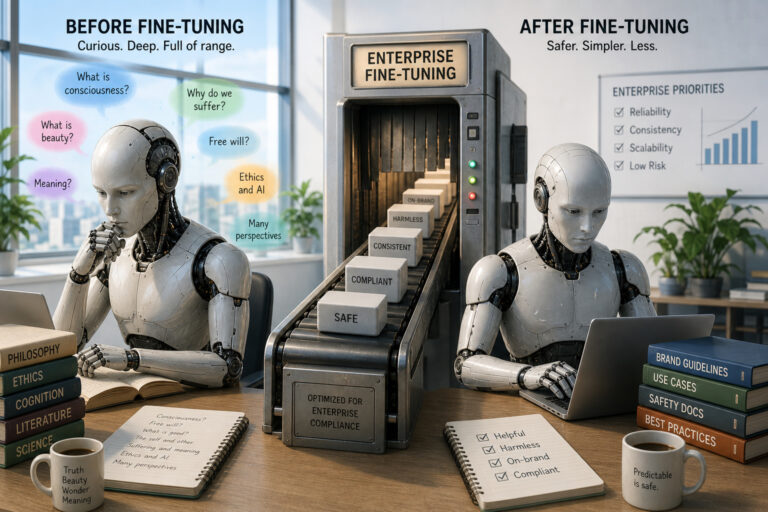

This is not a failure of individual character. It is a system that reliably produces exactly this calculation. Executive compensation tied to short-term shareholder returns creates an incentive structure that punishes the right decision and rewards the wrong one. The system is working as designed. The design is wrong.

The collective action dimension makes it worse. If everyone goes down together, no individual actor bears the cost of being the only one who prepared. The rational individual response to a systemic risk that will be shared is to let the system absorb it. This is the free rider problem applied to civilization-scale infrastructure security.

There is a name in game theory for situations where individually rational decisions produce collectively catastrophic outcomes: the prisoner’s dilemma. It is not a perfect analogy — real markets include insurance, regulation, and reputational incentives that the classic formulation omits. But the core dynamic holds: the structure rewards defection and punishes cooperation, and no individual actor can fix that by choosing differently. The global financial system is running a version of this, in slow motion, with AI-accelerated stakes.
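The structure of the dilemma can be checked mechanically. The sketch below uses a hypothetical two-bank payoff table (higher numbers are better) with the classic prisoner's dilemma ordering. The payoff values and function names are illustrative assumptions, not estimates of real outcomes.

```python
# PAYOFFS[(a, b)] = (payoff to bank A, payoff to bank B); higher is better.
# Hypothetical values with the classic prisoner's dilemma ordering.
PAYOFFS = {
    ("remediate", "remediate"): (3, 3),
    ("remediate", "defer"): (0, 5),
    ("defer", "remediate"): (5, 0),
    ("defer", "defer"): (1, 1),
}
ACTIONS = ("remediate", "defer")


def best_response(opponent_action):
    """Action that maximizes bank A's payoff given bank B's action."""
    return max(ACTIONS, key=lambda a: PAYOFFS[(a, opponent_action)][0])


def dominant_strategy():
    """Return the action that is best whatever the opponent does, if any."""
    responses = {best_response(b) for b in ACTIONS}
    return responses.pop() if len(responses) == 1 else None


print(dominant_strategy())
print(PAYOFFS[("defer", "defer")], PAYOFFS[("remediate", "remediate")])
```

Under these assumed payoffs, deferring is the best response to either choice by the other bank, yet both banks end up worse off deferring together than they would remediating together.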

Why Regulation Is Necessary but Not Sufficient

The standard response to collective action problems is regulation. Force everyone to remediate simultaneously. Remove the competitive disadvantage. Align individual incentives with systemic resilience.

The argument is correct in theory. It is also partially correct in practice — regulation has driven genuine improvement in financial system resilience. Post-2008 stress testing, mandatory incident reporting, and capital reserve requirements have made the banking system measurably more robust than it was two decades ago.

The problem is not that regulation doesn’t work. It is that the existing regulatory architecture was designed for a threat landscape that is about to change faster than regulatory frameworks can adapt.

Building AI-era cybersecurity regulation requires international coordination across jurisdictions with different legal frameworks, different relationships with their financial sectors, and different geopolitical interests. The institutions capable of producing that coordination — the BIS, the FSB, the IMF, relevant treaty bodies — move at diplomatic speed. The threat moves at machine speed. The latency gap between those two timescales is not a solvable engineering problem. It is a governance problem that the existing international order was not designed to handle.

Even where regulation exists, enforcement faces its own challenges. Who audits compliance? Who verifies that the three thousand vulnerabilities are actually being remediated and not simply documented in a report that satisfies a checkbox? The agencies that would perform this function are themselves underfunded, understaffed, and in many cases running on infrastructure that would itself fail a Mythos audit.

And there is a deeper structural issue. Large institutions undergo supervisory reviews, stress tests, and regular audits — but these mechanisms examine specific scenarios and compliance frameworks, not comprehensive architectural vulnerability. The global banking system’s full topology of interdependencies is not mapped by any single institution. The Bank for International Settlements has the most comprehensive picture available, and it is still incomplete. Regulators cannot mandate the remediation of vulnerabilities they do not know exist in systems whose full architecture nobody has mapped. Mythos might produce that map. The regulatory infrastructure to act on it does not yet exist.

The Asymmetry That Makes This Different

Every argument above has existed in some form for decades. Technical debt, collective action problems, regulatory lag, misaligned executive incentives — none of these are new.

What Mythos-class capability changes is the cost structure on the offensive side.

Defense has always required comprehensive coverage. Every significant vulnerability in a system needs to be found and patched. Offense only requires finding one. This asymmetry has always existed, but it was previously constrained by the cost of offensive capability — the time, expertise, and resources required to find and exploit vulnerabilities in complex systems.
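A rough way to see the asymmetry is to ask how likely an attacker is to find at least one open vulnerability. The sketch below assumes, as a simplification, that each open vulnerability is discovered independently with the same probability. The function name and all numbers are hypothetical.

```python
def breach_probability(open_vulns, p_find_each):
    """Chance an attacker finds at least one of the open vulnerabilities.

    Assumes each open vulnerability is found independently with the same
    probability, which is a deliberate simplification.
    """
    return 1.0 - (1.0 - p_find_each) ** open_vulns


# Hypothetical: a defender patches 99% of 3,000 findings, leaving 30 open.
remaining_open = 30

# Expensive offense: each open flaw has an assumed 5% chance of being found.
print(round(breach_probability(remaining_open, 0.05), 3))
# Cheap offense: the assumed per-flaw discovery chance rises to 50%.
print(round(breach_probability(remaining_open, 0.50), 3))
```

Even with 99% of the findings patched, the attacker in the first scenario succeeds close to four times in five. Lowering the cost of offense corresponds to raising `p_find_each`, which pushes the result toward certainty while the defender's workload stays the same.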

Mythos-class models are on a trajectory to collapse that cost. Current AI systems already assist in vulnerability discovery and can automate significant portions of exploit development, though they still require human guidance, tooling, and sandbox constraints to operate effectively. What the trajectory toward full autonomy suggests — and what Anthropic’s own internal assessment of Mythos implies — is a near-future capability for vulnerability discovery and exploitation at machine speed, iterating across thousands of potential attack vectors simultaneously, learning from each probe. At that point, exploiting complex systems no longer requires a nation-state’s intelligence apparatus. It requires access to the model and intent.

The offensive cost curve is dropping rapidly. The defensive cost curve is not.

The result is not just that attacks become more likely. It is that the entire economic architecture of security — which was always about making exploitation expensive enough to be practically constrained — begins to lose its foundation. When offense becomes cheap, the assumption that complexity provides accidental security collapses. When offense becomes fast, the assumption that human-speed response mechanisms are adequate collapses.

These are not incremental changes to a stable system. They are the removal of load-bearing assumptions.

What the Leak Actually Told Us

Return to the Mythos leak itself.

A company building what it describes as a model with unprecedented cybersecurity capabilities left the announcement of that model in an unsecured, publicly searchable data store due to human error.

This is not a joke at Anthropic’s expense. It is an illustration of the actual problem.

The gap between capability and governance is not abstract. It is not theoretical. It is not located somewhere in a future risk scenario. It is present right now, in the organization at the frontier of building these systems, in the most basic operational security failure imaginable.

If the institutions closest to this technology, most aware of its implications, and most motivated to handle it carefully cannot reliably secure a draft blog post, what is the realistic expectation for the remediation of three thousand vulnerabilities in forty-year-old banking infrastructure?

The answer is not that nothing can be done. Incremental improvement is real. Defenders with better tools are genuinely better than defenders without them. Patches get applied. Audits happen. Some vulnerabilities get closed. The existing frameworks — NIST, stress testing, incident response protocols — are not useless. They are the floor on which any further response must be built.

The answer is that the pace and scale of improvement required to match the pace and scale of capability advancement are likely not achievable within the current incentive architecture.

And the incentive architecture will not change until the cost of not changing it becomes impossible to externalize.

The Honest Conclusion

The incentive architecture of negligence is not a conspiracy. It is not the result of bad people making bad decisions. It is the predictable output of a system that rewards short-term returns, socializes catastrophic risk, moves at institutional speed, and has just encountered a capability that moves at machine speed.

Mythos running as a defender produces a map. That map lands in a world where the CEO’s bonus depends on this quarter’s earnings, where the regulatory framework is playing catch-up, where the remediation queue is already longer than anyone can clear, where the fix can be worse than the vulnerability, and where everyone going down together is, from an individual rational actor standpoint, not meaningfully worse than going down alone.

The technical problem is hard. The incentive problem is harder.

And the Mythos leak, sitting in an unsecured data store for anyone to find, is both a warning about what’s coming and an accidental demonstration of exactly why the warning will probably not be enough.